You can tune the following parameters to optimize the accuracy of identifying non-text areas: Remove areas identified as non-full text.This is done by filtering out sections using minimum and maximum black pixel concentration thresholds. For all horizontal segments that aren’t full text, identify the areas that are text vs.Split the document into horizontal stripes or segments to identify those that aren’t full text (extending across the whole page).Collapse the pixels horizontally to calculate the concentration of black pixels.You can follow the detailed steps in the following notebook. We can measure and analyze the pixel densities to identify areas with pixel densities that aren’t similar to the rest of document. Normal text paragraphs retain a concentration signature in its lines. We implement our second approach by analyzing the image pixels. If a block of text is inside large bounding boxes, the whole text block may be considered a visual and get removed using this logic.For visuals without a bounding box or surrounding edges, the performance may vary depending on the type of visuals.However, the approach has the following drawbacks:

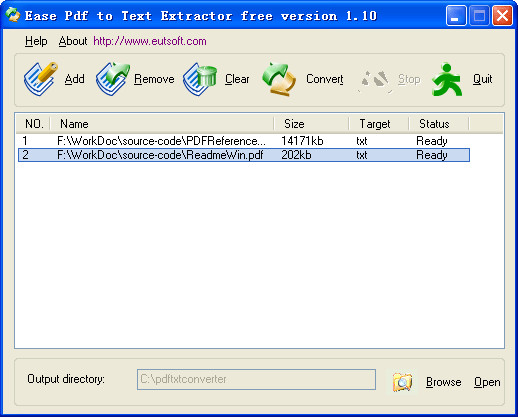

Its optimum parameters yield good results.It’s easy to implement, and quick to get up and running.This approach has the following advantages: This is helpful in cases where the texts in the visuals aren’t inside clearly delimited rectangular areas. Padding – When a rectangle contour is detected, we define the extra padding area to have some flexibility on the total area of the page to be redacted.It’s expressed in percentage of the page size. Minimum height and width – These parameters define the minimum height and width thresholds for visual detection.You can further tune and optimize a few parameters to increase detection accuracy depending on your use case: Identify the rectangular contours with relevant dimensions.Apply the Canny Edge algorithm to detect contours in the Canny-Edged document.In this approach, we convert the PDF into PNG format, then grayscale the document with the OpenCV-Python library and use the Canny Edge Detector to detect the visual locations. Option 1: Detecting visuals with OpenCV edge detector In this post, we focus on non-searchable or image-based documents.

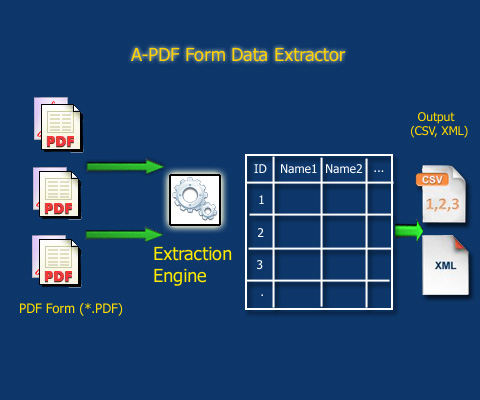

You can easily use libraries like PyMuPDF/fitz to navigate the PDF structure and identify images and text. These types of PDFs retain metadata, text, and image information inside the document. Searchable PDFs are native PDF files usually generated by other applications, such as text processors, virtual PDF printers, and native editors. You can extract these visuals out for further processing, and easily modify the code to fit your use case. For the second method, we write a custom pixel concentration analyzer to detect the location of these visuals. In the first method, we use the OpenCV library canny edge detector to detect the edge of the visuals. We use two different types of processes to convert and detect these visuals, then redact them. Solution overviewįor this post, we use a PDF that contains a logo and a chart as an example. In this post, we illustrate two effective methods to remove these visuals as part of your preprocessing. This information isn’t needed in downstream processes, and you have to remove it before using Amazon Textract to analyze the document. For example, many real estate evaluation forms or documents contain pictures of houses or trends of historical prices. These visuals contain embedded text that convolutes Amazon Textract output or isn’t required for your downstream process. In many use cases, you need to extract and analyze documents with various visuals, such as logos, photos, and charts. Amazon Textract can detect text in a variety of documents, including financial reports, medical records, and tax forms. Amazon Textract is a fully managed machine learning (ML) service that automatically extracts printed text, handwriting, and other data from scanned documents that goes beyond simple optical character recognition (OCR) to identify, understand, and extract data from forms and tables.
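The pixel concentration steps of Option 2 can be sketched with NumPy alone. This is one possible interpretation, not the notebook's code: the function names `find_segments`, `merge_runs`, and `remove_non_text_areas` and every parameter default (`black_thresh`, `full_width_pct`, `min_conc`, `max_conc`, `col_gap`) are hypothetical and would need tuning on your own documents. Here a stripe counts as full text when its ink spans at least `full_width_pct` percent of the page width; inside the other stripes, areas separated by blank column gaps are kept only if their black pixel concentration lies between `min_conc` and `max_conc`.

```python
import numpy as np


def find_segments(mask: np.ndarray) -> list[tuple[int, int]]:
    """Return the [start, end) index runs where a 1-D boolean mask is True."""
    padded = np.concatenate(([False], mask, [False])).astype(np.int8)
    diff = np.diff(padded)
    starts = np.flatnonzero(diff == 1)
    ends = np.flatnonzero(diff == -1)
    return list(zip(starts.tolist(), ends.tolist()))


def merge_runs(runs, max_gap):
    """Merge runs separated by fewer than max_gap blank columns (e.g. gaps between letters)."""
    merged = []
    for start, end in runs:
        if merged and start - merged[-1][1] < max_gap:
            merged[-1] = (merged[-1][0], end)
        else:
            merged.append((start, end))
    return merged


def remove_non_text_areas(gray, black_thresh=128, full_width_pct=80.0,
                          min_conc=0.05, max_conc=0.5, col_gap=10):
    """Paint white the areas whose black pixel concentration doesn't look like text.

    gray: 2-D uint8 page image. All defaults are hypothetical starting points.
    """
    black = gray < black_thresh  # True where a pixel counts as black
    page_w = gray.shape[1]
    out = gray.copy()
    # Step 1: collapse the pixels horizontally -> black pixel concentration per row.
    row_conc = black.mean(axis=1)
    # Step 2: horizontal stripes are runs of rows that contain at least one black pixel.
    for y0, y1 in find_segments(row_conc > 0):
        cols = black[y0:y1].any(axis=0)
        xs = np.flatnonzero(cols)
        # A stripe whose ink spans most of the page width is treated as full text.
        if xs[-1] - xs[0] + 1 >= page_w * full_width_pct / 100:
            continue
        # Step 3: split the stripe into areas separated by blank column gaps and
        # test each area's concentration against the min/max thresholds.
        for x0, x1 in merge_runs(find_segments(cols), col_gap):
            conc = black[y0:y1, x0:x1].mean()
            if conc < min_conc or conc > max_conc:
                # Step 4: remove the area identified as non-text.
                out[y0:y1, x0:x1] = 255
    return out
```

In this sketch, a solid logo exceeds `max_conc` and is painted white, while a short final line of a paragraph fails the full-width test but survives the concentration check because its density looks like text.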
